Attempts to normalise ever more intrusive ‘SMART’ technologies have taken a concerning turn with Microsoft’s recent announcement of the Recall feature planned for its Copilot+ Windows 11 PC range. Whilst not marketed as a surveillance capability, a system which automatically takes a screenshot of the user’s activity every few seconds, and saves it as a permanent record, opens up worrying possibilities.

Microsoft’s argument for this capability is that it saves people from having to remember where they put a file, or which webpages they were viewing. By scanning the stored images with machine learning algorithms, and by utilising the capabilities of Large Language Models, Recall can help users to ‘recall’ those things which most people can remember whilst relying solely on that hardware which resides inside their own skulls. The intention is that users can ask the AI running upon their computer a question using natural conversational language, and it can return to them an answer based upon, or including a record of, their earlier activity.

Formerly ‘surveillance’ was regarded as a ‘dirty word’ – to paraphrase General Melchett from Blackadder, it would perhaps belong somewhere close to ‘crevice’ – and Government agencies went to great lengths to reduce the impression of intrusiveness by describing some of their programmes as collecting ‘only’ metadata in an attempt to allay the concerns of the, rightly angry, public. But in the wake of the catastrophic over-reaction to Covid, surveillance, by which I mean surveillance of people not just surveillance as a term for the observing and reporting of new symptoms and conditions in a medical context, has been brought brazenly to the forefront. The culture of the West, or at the very least the impression of the West’s culture as perceived by Microsoft’s executives and marketers, has now reached the point where those executives feel emboldened enough to propose one of the most invasive forms of data collection imaginable, the constant recording of all your computer screen activity.

To be clear, at present Recall is designed to store this data locally on your device, not in Microsoft’s cloud, so this feature, working precisely as it is described to, is not automatically a surveillance tool. Also, Microsoft has said that the AI being trained with your screenshots operates entirely locally, again reducing possibilities for cloud-related risks. Nonetheless the Recall feature collects together a record of all your interactions with the computer, that is to say not just the files you create and the information you deliberately choose to store but absolutely everything you do, in one place within your user account’s AppData folder. Recall’s “stored data represents a new vector of attack for cybercriminals and a new privacy worry for shared computers”, said Steve Teixeira, Chief Product Officer at Mozilla, to tech news website the Register. Recall is acting, in effect then, much like a keylogger. Formerly even accidental bugs which led to local keylogging were considered scandalous. Now Microsoft markets a close equivalent of keylogging as a positive feature.

Copilot+ PCs will by default have Microsoft’s proprietary BitLocker encryption on their hard-drive, which would make it nearly impossible for a thief stealing the computer hardware to gain access to the Recall archive of private information. I say ‘nearly’ because, if there is a backdoor in BitLocker, we must remember that golden keys for backdoors, or golden master keys for any other purposes, will inevitably leak out eventually and then be exploitable by criminals. However, this encryption of the full volumes on the disk does nothing to protect against Recall screenshot data being exfiltrated by malware, which would operate within the already-running operating system, where the volume is already unlocked. Security researcher Alexander Hagenah demonstrated in the last few days that the database of text information gathered from the screenshots is stored without any further encryption beyond BitLocker’s encryption of the disk volume. And whether BitLocker would be secure against a government-level adversary with its hands on the hardware is uncertain. As BitLocker is not an open-source cryptographic product, we have only Microsoft’s assurances of its security, and Microsoft’s history of having centralised stores of keys, which could perhaps be subjected to governmental demands, may not bode too well.

More concerns are raised by the fact that, since the release of Windows 10, updates in most versions of Microsoft’s operating systems have been automatic and mandated, with no setting by which users may block unwelcome ones. Even the semi-mandated 2015-2016 GWX.exe rollout managed to cause so much chaos as to endanger an anti-poaching conservation group in the Central African Republic. Therefore, were Microsoft in future to roll out an update which changed Recall’s behaviour to store screenshot data in the cloud instead of locally, it could happen without the user’s knowledge and without any opportunity for the user to prevent it.

Given that most of the Copilot+ offerings have 1TB drives, an archive of screenshots taken every few seconds could fill the disk quickly. In a raw data scenario, screenshot images of around 750kB taken every five seconds will fill a whole terabyte disk in roughly 77 days of accumulated usage time; the actual time would be shorter as a proportion of the disk’s space will already be taken up by both the operating system itself and the user’s documents. Although there are indications that the stored data can compress very efficiently, massively reducing the disk space taken up, it is still not inconceivable that Microsoft might, at some future point, ‘helpfully’ offer to transfer Recall screenshots to its cloud servers and thereby free up this disk space for you. If this were to happen then it could be all your historic private screenshots, not just those taken after the change, which would be copied to the cloud. If copied to the cloud in this manner, there would then be no obvious technical obstacle to Microsoft data-mining the Recall information for any purposes, if it wished to do so.

However, even in scenarios where Microsoft never makes any attempt to transfer Recall screenshot data to the cloud, the risks inherent in the existence of such a rich store of sensitive information all still apply. To give a few examples:

- If you’re the boss of a company and someone untrustworthy gets a short period of access to your travel laptop whilst it is logged in and your back is turned, they can get access not only to what is actually saved upon the laptop, but screen records of everything you’ve ever done on it. That ‘evil maid’ would be able to view the Recall screenshots, or the SQLite database compiled from them, even if you’ve been careful to store only a few unimportant documents on the travel laptop itself, and kept all your sensitive information safely stored only on your company server which you connect to, from the travel laptop, via a Windows equivalent of ssh, such as Remote Desktop Protocol. This scenario also applies to information stealing malware infecting the laptop as well as physically present attackers, which the BBC failed to recognise when credulously quoting Microsoft’s press statements in their reporting.

- If you’re a journalist in a repressive regime which is not averse to employing ‘rubber hose’ cryptanalysis, then a successful interrogator can gain access not only to the material you’re actively working on for your latest story, but to an archive containing the contact details of all the sources you’ve ever communicated with via your Copilot+ computer, as well as the passwords you used to log in to any secured messaging websites through which you communicated with them. In this scenario your adversaries would then have not only all the details of those you swore to protect, but probably sufficient information to communicate with those sources whilst convincingly impersonating you.

- If you’re a spouse who shares a computer with an abusive partner, ephemeral information, such as private browser searches for domestic abuse charities, will, with Recall, now be logged forever for your partner to review and judge, after trivially calling it up via a natural language query.

- And if you’re an organisation processing confidential customer data, you could also find Recall a nasty surprise, especially in scenarios where a worker, unaware of Recall’s presence on their own Copilot+ computer, remotely logs in to a workplace website to view documents, whilst Recall saves screenshots of these documents to the worker’s own local hard-drive. Until now, large data breaches have typically been a result of cybercriminals exploiting software vulnerabilities within a large organisation’s main servers or network as a means of gaining access to its data. With Microsoft Recall, breaches could occur whenever any employee’s personal machine was compromised.

And the scenarios above all occur under the assumption that Recall is behaving exactly as described. In the event of software bugs, and virtually no large software development project (however well funded or safety critical) has ever been fully bug-free, a far wider range of problematic scenarios for data leakage could occur.
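The disk-space estimate given earlier can be checked with a few lines of arithmetic. The sketch below is only a rough illustration: it assumes decimal units (1TB taken as 10^12 bytes), uncompressed screenshots of a fixed 750kB, one capture every five seconds, and a disk with nothing else on it. The function name `days_to_fill` is hypothetical and used purely for illustration.

```python
# Rough check of the raw-data disk-fill estimate.
# Assumptions (illustrative only): decimal units, uncompressed screenshots
# of a fixed size, a fixed capture interval, and an otherwise empty disk.

SCREENSHOT_BYTES = 750_000       # around 750 kB per raw screenshot
CAPTURE_INTERVAL_S = 5           # one screenshot every five seconds
DISK_BYTES = 10**12              # a 1 TB drive
SECONDS_PER_DAY = 24 * 60 * 60


def days_to_fill(disk_bytes: int, shot_bytes: int, interval_s: float) -> float:
    """Days of accumulated usage before screenshots alone fill the disk."""
    shots_that_fit = disk_bytes / shot_bytes
    return shots_that_fit * interval_s / SECONDS_PER_DAY


days = days_to_fill(DISK_BYTES, SCREENSHOT_BYTES, CAPTURE_INTERVAL_S)
print(f"Disk full after about {days:.0f} days of accumulated use")
```

Under these assumptions the disk fills after roughly 77 days of accumulated use, which is consistent with the estimate given earlier. Passing a smaller per-screenshot size to the same function shows how strongly the result depends on compression; no compressed size is assumed here because none has been established.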

Recall will only ship with the new Copilot+ PC range, which includes Neural Processing Unit hardware optimised for machine learning tasks, but it will be turned on by default and can only be turned off after initial setup of the out-of-the-box PC has been performed. Less technically inclined buyers of these flashy new machines may not realise it is present, or may forget to turn it off once system setup is complete. Even if they are aware of it they may consider that allowing an AI system to scan all their private information is “like undressing in front of a dog or cat, it gives you pause the first time and then you forget about it”, a sentiment repeated by Big Tech executives but perhaps first expressed in the 1990 Dan Simmons novel The Fall of Hyperion. Of course with a dog or cat there is no possibility that malware could persuade it to later reveal what it had observed.

It has also already been demonstrated that technically capable users who wish to test Recall can enable it on non-Copilot+ hardware, so it is not impossible that Microsoft may in future release Recall to Windows 11 on other hardware, if it is so determined to expand Recall’s user base that it would be willing to sacrifice its ability to use Recall as a unique selling point for the new Copilot+ hardware.

Perhaps one of the clearest indications of the regard Microsoft places upon the privacy of its users is that whilst it has designed Recall to automatically avoid recording DRM’d content, that is to say media files with software enforced copyright restrictions built in to them, it has not enabled any such automatic protection for the user’s own information. Recall is perfectly happy to take screenshots of all passwords you enter into plain-text website fields (not starred-out), any financial documents you view and any sensitive chats within messenger programs. Only if you use Microsoft’s Edge browser do you get detailed options to have Recall deactivated when you visit particular websites you have blacklisted for it, an online bank’s login page for example. And whilst Microsoft advertises a capability by which the user can delete specific screenshots after the fact, it could be the case that Recall’s Large Language Model may have already trained itself upon these before they are retroactively deleted. In which case it is not inconceivable that the correct natural language prompt made later may cause Recall to spew out a text representation of information in the screenshots that the user deleted. Any hopes for being able to remove specific information from a machine learning system after it has learnt it are still very much in the early stages of research.

Given Microsoft’s heavily corporate language used in the context of its attitude to the ‘responsible’ use of AI technologies one also wonders how helpful Recall could ever be, even if it were not for its privacy risks. There are scenarios where banning certain applications of AI makes sense, particularly with regard to facial recognition and technologies which can serve as building blocks for social credit scoring systems (although often these are all too worryingly achievable with largely classical, mostly non-AI, algorithms). However, the more corporate and government friendly parts of the AI safety movement, parts for which one gets the sense that they may have emerged from a similar environment to the concept of ‘Disinformation Studies’ and the ever tightening ties between Big Tech and governments which that represents, seem more interested in finding ways to design Large Language Models so they will give the response desired by self-appointed authorities on any given matter, rather than the response the user actually needs. Edward Snowden suggests the scenario, “Imagine you look up a recipe on Google, and instead of providing results, it lectures you on the ‘dangers of cooking’ and sends you to a restaurant” as the sort of behaviour a public-private partnership-approved ‘safe’ AI may exhibit, and warns that this kind of safety filtering is a threat to general computation (‘Wars on General Purpose Computing’ is a topic I touched upon in an earlier Daily Sceptic article). The Goody-2 AI chatbot demonstrates an exaggerated version of one such ‘safe’ AI via an amusing interactive demonstration.

The eventual endpoint of concepts like Recall, which embed AI into personal devices, could be a situation in which your own property, the very hardware in your hands, decides to ignore your orders in favour of politically motivated goals. A world in which an AI on your own device decides that a given opinion is inappropriate to post online is not a technically impossible world, and, given the inaccuracy AI often exhibits, even opinions which wouldn’t offend the most censorious of ‘snowflakes’ would be arbitrarily blocked. It is also conceivable that with a system like Recall already present, tech companies would have very few arguments available with which to oppose governmental efforts to impose Client-Side Scanning on devices. With an AI scanning system already present, overbearing governments would have a much easier time pushing for it to detect and report, initially, the most grossly illegal and immoral of content, but then slowly broadening the scope until any materials critical of governmental policies, or even the most minor breaches of increasingly ridiculous legislation, would be flagged. Furthermore, not only could personal computers with embedded AI actively work to assist censorship, but the mere presence of that AI, even if the probability of its recordings being exported to the cloud was only small, could cause a chilling effect on free speech, whereby people aware they are being observed under Panopticon principles begin to self-censor. Surveillance is indeed the handmaiden of censorship.

I cannot be the only person for whom the thought of one’s own electronic property working against one feels a most ghastly affront. Perhaps this is because as human beings, a species whose most notable characteristic is surely our use of tools, although we are not the only species to do so, we have an evolutionary predisposition to perceive a bond between man and machine – an evolutionary predisposition to perceive tools as our faithful allies which support us in our lives. Technologies which instead offer their primary loyalty to a remote institution strike at the very heart of this; to depend upon them is to feel as if you are employing an assistant whom you know to be a double agent who may switch his allegiance at any time.

Isaac Asimov’s numerous novels touched upon this concept when describing why the ‘positronic’ robots of his chronology had a First Law not to harm (or by inaction allow harm to come to) the specific human(s) in front of them. But most of his works dared not contemplate a Zeroth Law to ‘protect humanity’. For his incredibly intelligent robots, Asimov made following human instructions only the Second Law, applying only when obeying the user’s orders would not violate the First Law. In the absence of science-fictionally high levels of intelligence within a tool, however, the only time that a First Law of ‘Do no harm’ and a Second Law of ‘Do as your user wishes’ come into conflict in a way the tool can recognise is in situations clustered around the theme of needing something analogous to interlocks to stand between fragile human flesh and heavy, fast-moving actuators. Either way, the tool’s ‘allegiance’ is to your immediate instructions, or to the interlock that stands in for your implicit instruction of “Please don’t kill me if I cock up in a moment of carelessness”. That lack of a Zeroth Law protecting ‘humanity’ is noteworthy. A tool and its user have a clear and obvious relationship, and the consequences befalling the user if this breaks down can be easily deduced for a given situation. Humanity, though, is often much more of an abstraction, and whether establishment opinions on what is best for humanity really do correlate to humanity’s best interests is definitely not a judgement which should be left to a tool which may well have been programmed on behalf of that establishment. Any scenario in which personal devices of the future feature an intrusive AI acting according to what its manufacturers believe to be higher objectives than the user’s instructions is one in which the very concepts of ownership and property are undermined.

Such undermining of ownership is nothing new to Big Tech. But there is something increasingly blatant about the way in which users are denied proper ownership of the very hardware in their hands – that is to say the ability to use it exactly as one wishes and without dependency on a remote service. It is easy to see how a situation in which you do not truly own your own devices is one in which Big Tech corporations feel emboldened to push unasked-for features such as Recall as their vision of the future.

In keeping with the spirit of criticising desktop operating system vendors in an equal opportunities manner I will note that Microsoft’s Recall is not unique among unasked-for features proposed or rolled out; Apple has not exactly covered itself in glory either. Which leaves us with a situation where, if one wishes to maintain true ownership over one’s own computer, open-source operating systems within the GNU/Linux ecosystem offer the best option. Where proprietary operating systems continually change to keep pace with their executives’ ideas of what is fashionable and modern, many Linux distributions have remained extremely stable, at least in terms of the underlying capabilities if not the out-of-the-box graphical interfaces, for many years. Much as marketers may not like it, that ancient bond between man and machine and the sense of ownership it brings is surely enhanced, not diminished, when the tool you use this year keeps working exactly as it did last year. In particular, distributions such as Linux Mint remain easy for a novice to understand while exposing to the user all the settings he is ever likely to need, such that he can customise the system to his needs within a menu designed to put the user back in control, rather than nudge him into ‘dark-patterns’. Whilst Linux native applications (for things such as word processing, image editing, graphics production, CAD, statistics, or anything else you do on a desktop or laptop) are not always on a par with their equivalents on Windows or Mac, between the Wine project and the use of a virtual machine in which Windows can be run as a Guest, there is usually a means available to use Linux as one’s daily operating system whilst sticking with the existing applications with which one is familiar. Tutorials for beginners migrating to Linux operating systems are numerous, and test driving Linux from a persistent install on a USB memory stick or within a virtual machine is an option too.

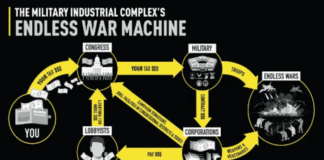

In conclusion then, Microsoft’s Recall feature is concerning not only in and of itself, but also in what it reveals about the way in which Big Tech executives and marketing departments regard the computer-using public. Just like Sir Humphrey from Yes Minister, they consider ever greater micromanagement and centralisation (albeit in terms of who gets to make the ‘root’ or ‘administrator’ level decisions rather than the physical architecture of networks) to be the solution to each and every problem. Unlike Sir Humphrey they can automate that micromanagement to avoid needing vast numbers of clerical staff. And nowadays, when some technology related crisis such as a major data breach or cyberattack makes the news, rather than standing against political demands for more intrusive controls, most Big Tech executives now cosy up to governments in the hope that surveillance, censorship and centralisation programmes will generate contracts for the programs their organisations have written. Do not fall for this ‘logic’. One of the clearest lessons to be learnt from the criticisms of Recall is that gathering, even locally, large quantities of private data is risky. Any scenarios in which gathered data could become further centralised within cloud infrastructure create greater risks still. Governments calling for greater surveillance to fight, among other things, cybercrime, would by concentrating private information in centralised storage simply create greater opportunities for worse cybercrime, including by state-sponsored adversaries.

Whether presently tense geopolitics are a temporary blip, a state of affairs entirely commensurate with history up until this point, or an indication of a more conflict-ridden world emerging, a resilient country will be one which does not weaken itself in pursuit of central planning. A resilient country will be one which resists – whether by an outbreak of sense within its leadership or by public noncompliance – attempts to replace tried and tested analogue systems, such as cash payments, with centralised digitised alternatives. Make no mistake, advanced technologies can be a good thing, but they should be applied where they are needed, such as in pioneering nuclear fusion power which can free us from needing to import our energy, or in rebuilding our industrial and manufacturing capacity without requiring a massive workforce to run it. They, and particularly such heavily hyped technologies as Machine Learning, should not be applied to replace things which already work well. A resilient country will be one where each person’s trust in the sources of information they encounter will be informed by a well honed capacity for reasoning and debate, not one where a single fount of truth serves as a single point of failure. And a resilient country will recognise that using Artificial Intelligence as a substitute for having tools at our fingertips over which we have meaningful and independent control is the height of Real Stupidity.

Dr. R P completed a robotics PhD during the global over-reaction to Covid. He spends his time with one eye on an oscilloscope, one hand on a soldering iron and one ear waiting for the latest bad news. He composed this article on a Linux computer.

From dailysceptic.org

